Qwen3-1.7B-Wiki: Multi-turn RL to play the WikiGame

This post explores training a small language model (Qwen3-1.7B) to play the Wikipedia game through multi-turn reinforcement learning. A baseline performance of 13.5% was improved to 49.4% through SFT followed by Group Relative Policy Optimization (GRPO), but was unable to beat GPT4.1-mini or DeepseekV3. Model scale remains a fundamental constraint for tasks requiring complex multi-turn planning and adaptation, suggesting that architectural limitations cannot currently be fully overcome through training methodology alone.

Intro

LLMs have shown remarkable capabilities in solving complex reasoning tasks, but can smaller models learn to navigate multi-turn problems through reinforcement learning?

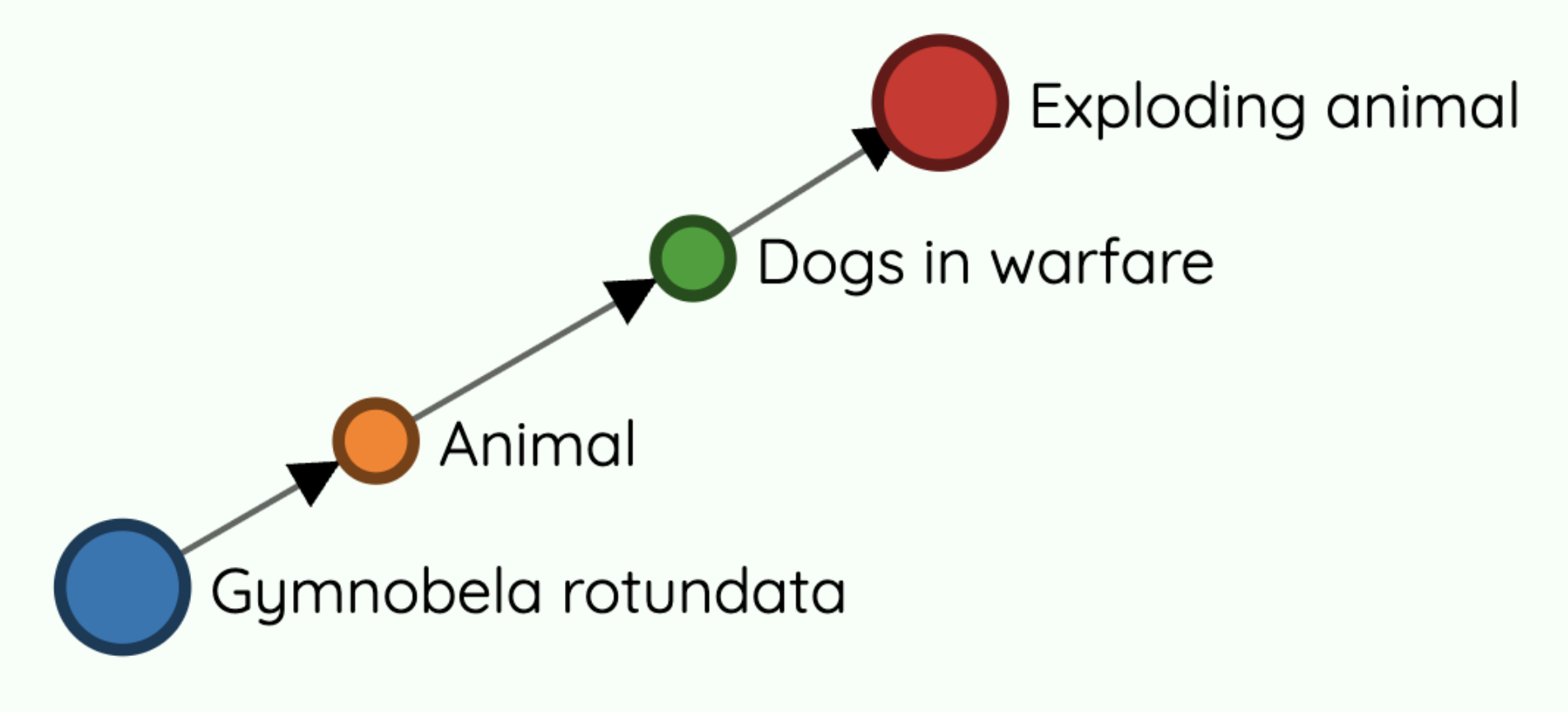

The wiki game/wiki racing game/wiki links game/six degrees of wikipedia is a game where players must navigate from one Wikipedia article to another using intermediate article links only, the goal is usually to do this in the fewest steps or the fastest time. If you've ever played before, you'll notice that most articles can be reached in surprisingly few steps. Consider the case of Gymnobela rotundata (sea snail species) → Exploding animal which can be achieved in just 3 steps.

A simple strategy is to find a way to a "big" article with many outbound links like United States or Youtube and branch off closer to the domain of the target article. I would wager that most humans could solve 99% of wiki game pairs in under 12 steps using this approach and even fewer steps for an optimal strategy (a 2018 post suggests this to be closer to 3 to 4 on average). Unsurprisingly, LLMs can perform fairly well in the game since it mostly boils down to making connections between different topics.

Data

There are two sets of data utilized:

- English wikipedia articles containing the full list of outbound links

- Article pairs to run the game with. e.g. Gravity → Royal Canadian Mounted Police or Nvidia → Impressionism

Fortunately, the Wikimedia foundation has provided a structured snapshot of English Wikipedia from September 2024. We'll just do some processing to extract the urls from the nested sections. This ended up being ~14GB when stored in DuckDB due to large number of articles (~7 million) and links in each article.

It's also important here to make sure that we filter out dead links to prevent the model from clicking on them. Dead links are tricky to tackle due to the sheer number of articles, so the lazy way is to filter them out (i.e. check for existence in the database) as needed whenever we pass the links of the current article to the model.

As for the second dataset, the WikiSpeedruns website contains over 1300 different prompts that real humans have played and lists some statistics like the shortest path length (and the solution path) and average clicks that could serve as a reasonable proxy for how difficult a given pair is.

Data validation

Let's make sure the article pairs we've gathered actually have valid paths. The game is fundamentally a graph problem (BFS or a modified bi-directional BFS) but since we already know a valid path (thanks to human players!), we just need to run through this path and check that every article is valid/exists and has a link to the subsequent article in the chain. If the chain is broken, then we can do a BFS to the end article and verify that at least one path exists. Running this for all the pairs shows that they all have a valid path between them.

Environment

Task Formulation

Why does the Wiki game make a good RL task?

- Real knowledge application - Models must leverage their training on Wikipedia data and general world knowledge while learning new navigation strategies

- Verifiable outcomes - The task is trivially verifiable. Even if a given task has a large number of possible solutions as long as it can be verified, the model can learn from it. This applies not just to simple RL environments like gsm8k, puzzles (wordle, NYT connections, sudoku, etc) but also to potentially more complex tasks like protein structure prediction or algorithm optimization (AlphaEvolve).

- Scalable difficulty - The task difficulty and complexity can be very easily varied depending on the model and/or capabilities we are testing:

- Easy: For a given article, provide the full list of outbound article links and no other content.

- Medium: For a given article provide all section contents, but urls are embedded within the text in markdown format.

- Medium-hard: Similar to above but only provide one section (and its urls) at a time and have the model request additional sections through a tool call.

- Hard: Emulate a real wiki game session by having the model use a browser and scroll through articles and click links. This moves the task beyond text and into the multimodal realm.

Tools and Gameplay Loop

We're going to provide two tools:

click_link: Click the most promising link and move to a new article, presenting the list of new article links to the model.hint: Provides the abstract of the goal article, useful in cases where the model might be stuck, doesn't recognize or is confused on what the goal article is.

An initial run with Qwen3-1.7B with the pair Hippopotamus → Hippocampus yielded the following reasoning trace:

To get from "Hippopotamus" to "Hippocampus", I need to find a path that connects these two related but distinct animals. Since "Hippocampus" is a type of fish, I should look for links in the current article that might lead to aquatic life or fish.

This is particularly interesting because Hippocampus is the genus name for seahorses. In this case, however, it refers to the region of the brain.

On each successful click_link the model is presented with the following JSON result:

{

"start_article_title":"Benjamin Netanyahu",

"goal_article_title":"Ben 10",

"current_article_title":"CNN",

"available_links":[

"1080i","2008_Tibetan_unrest","A2_CNN","ABC_News_Radio", "..."

]

}We set a maximum of 15 turns before we cut the model off.

Model selection and Benchmarking

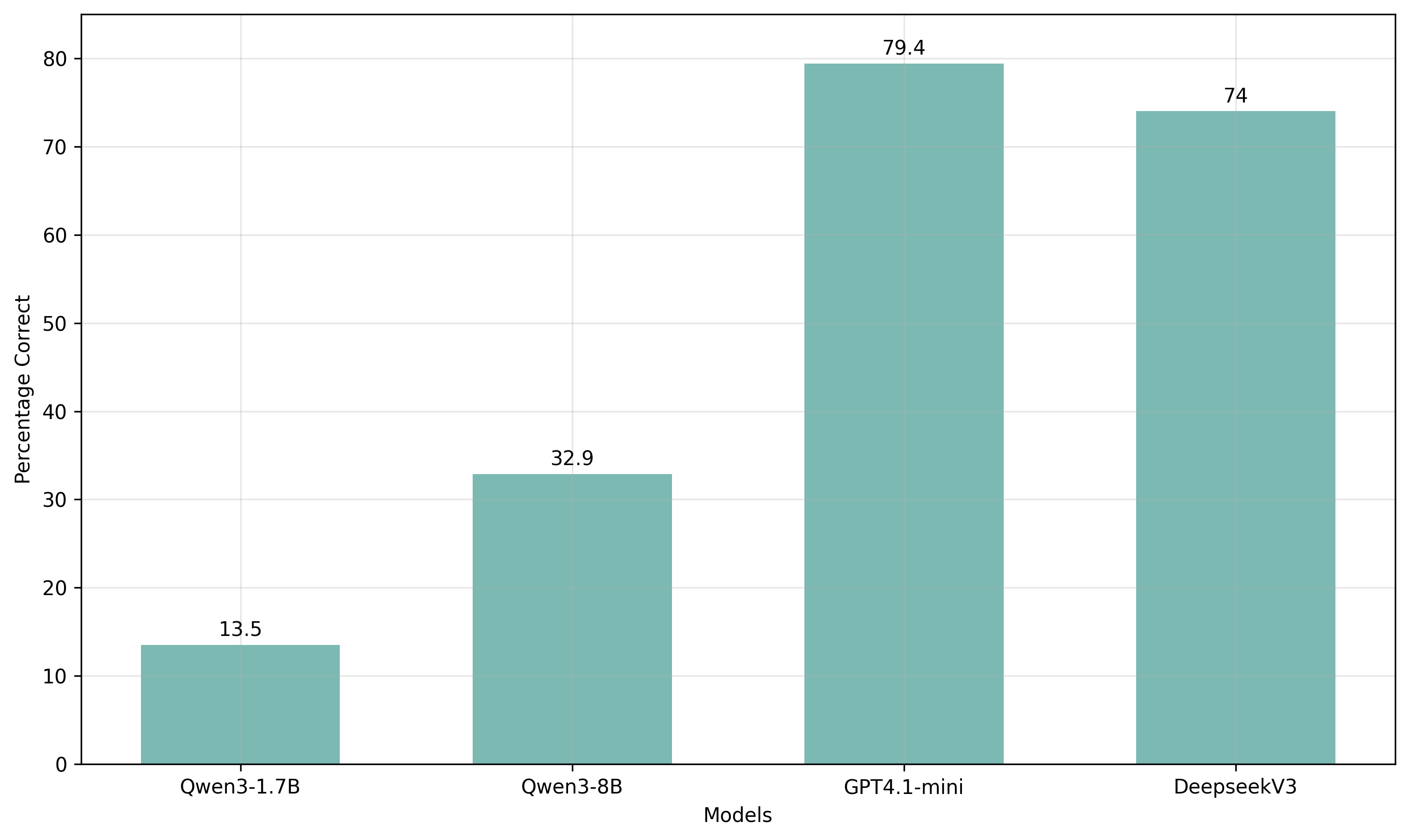

Let's first measure the performance of models using our environment. The contenders are:

- GPT-4.1-mini

- DeepseekV3

- Qwen3-8B

- Qwen3-1.7B - we're going to train this smaller 1.7B instead of its larger 4B or 8B siblings due to favorable cost, training speed and memory requirements. The 13.5% baseline also shows capability but room for improvement

Reward Function

We'll need to define a good reward function to incentivize the model to learn properly, but we can keep it relatively simple:

Successful Path

Did we reach the ending article within the given number of turns? We can also include a small step penalty here to reward shorter paths. This should have the largest weight (1.0), since it is ultimately what we want the model to learn.

XML Formatting

<think></think> <tool></tool>

We want to reward following our XML schema correctly, but only for correct usage. This will have a smaller weighting of 0.2, just enough to incentivize.

Correct Tool Use

Correct tool call formatting is necessary but not enough. The model should also understand how to use the tool and not hallucinate arguments, e.g. calling click_link on an article that isn't linked. Empirically, weighing this anywhere from 0.5 to 1.0 worked well, but removing it caused the hallucination rate to increase.

Reward Hacking

Make sure your rewards are unhackable or the model will find a way to hack it. An early iteration of the tool use reward measured the number of incorrect tool calls and gave full points if there were zero incorrect tool calls, so the model quickly learned that the easiest way to get a perfect score was to never call any tools, achieving zero incorrect tool calls.

R_total = 1.0 × R_success + 0.2 × R_format + 1.0 × R_tool

Training

But first, SFT

Qwen3-1.7B is capable of completing the task, but has a big issue with incorrect formatting of tool calls and overthinking, which tends to overuse phrases like "Wait" or "Alternatively", leading to minimal exploration. We can fix this by doing a round of SFT using rejection sampling on DeepseekV3's trajectories, taking only the samples where the model succeeded at the task with a high reward on both formatting and correct tool use. DeepseekV3 is ideal for this because it performs well and the CoT is fairly terse. Around 200 high quality trajectories were generated from DeepseekV3 and used in SFT.

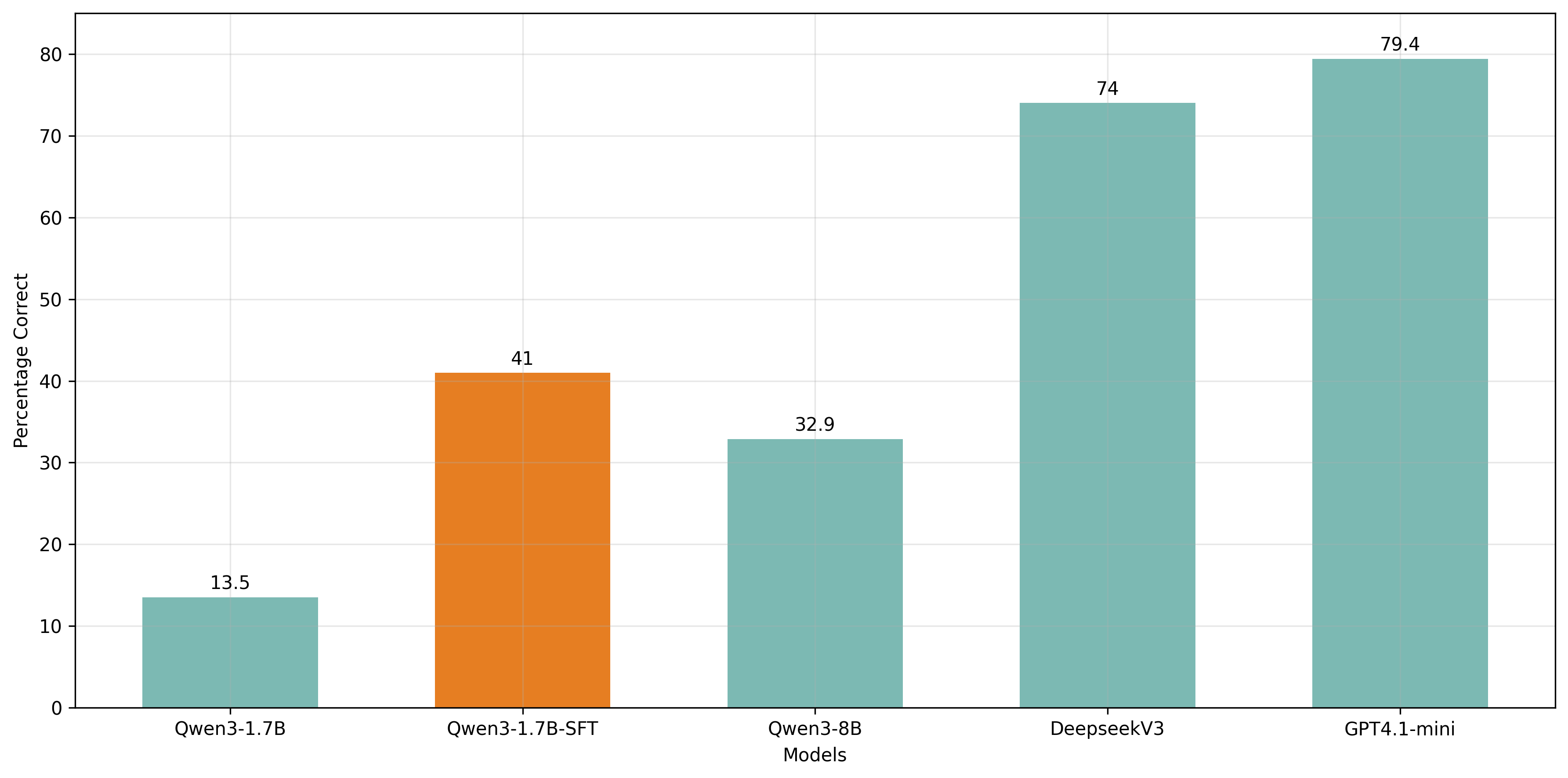

With just SFT, pass@1 performance already improves drastically from 13.5% to 41%.

This is honestly the biggest lesson from this project: SFT on good trajectories does most of the heavy lifting. RL then polishes the rough edges. I suspect this SFT-then-RL pattern will become standard for these kinds of tasks--you need the model to be "in the ballpark" before RL can meaningfully optimize. This indicates a three-stage post-training pipeline: SFT for basic capability, some kind of preference tuning, then RLVR for task-specific polish.

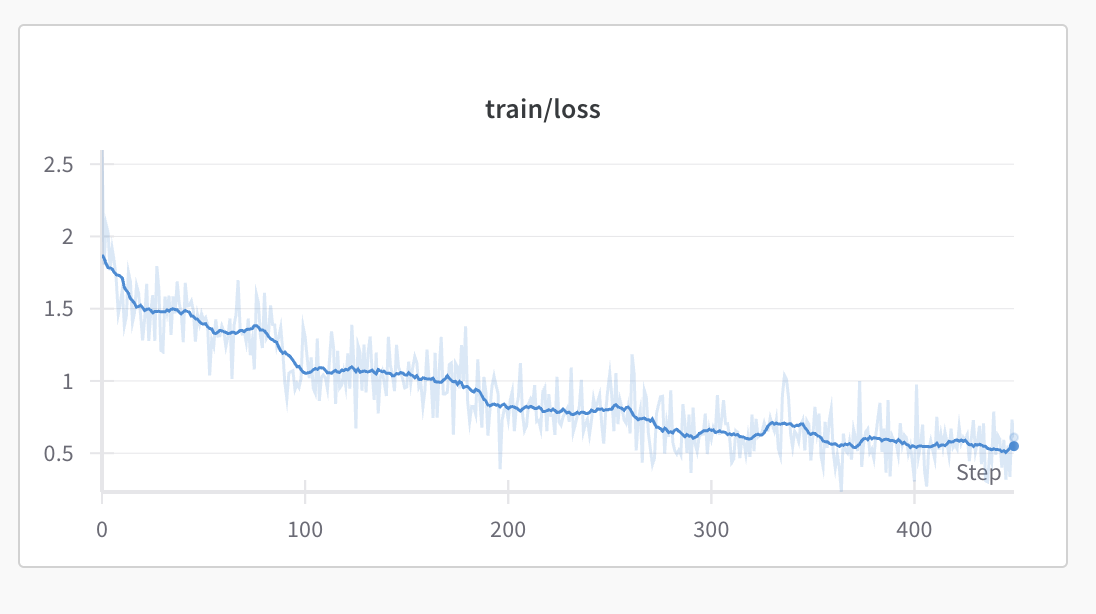

Let's go GRPO

Now that we have a good starting point from SFT, we're going to use Group Relative Policy Optimization (GRPO). Conceptually, GRPO improves the policy by generating multiple responses per prompt and nudging the model to prefer generations that score higher rewards. We'll use Will Brown's verifiers library, a simple and hackable library, to do multi-turn GRPO training.

After many training runs, the best run was yielded by the following setup:

- 2x3090 (1 for vLLM inference, 1 for training) for 8-9 hours with 1000 steps on a training set of 900 prompts

- Constant Learning Rate 1e-6 with warmup steps of 10

- Per device batch size = 4 with 8 generations per group

- Gradient Accumulation Steps = 2

- max_grad_norm = 0.001

- KL coefficient (beta) = 0.001

- μ = 2 i.e. one-off policy step per batch

Results

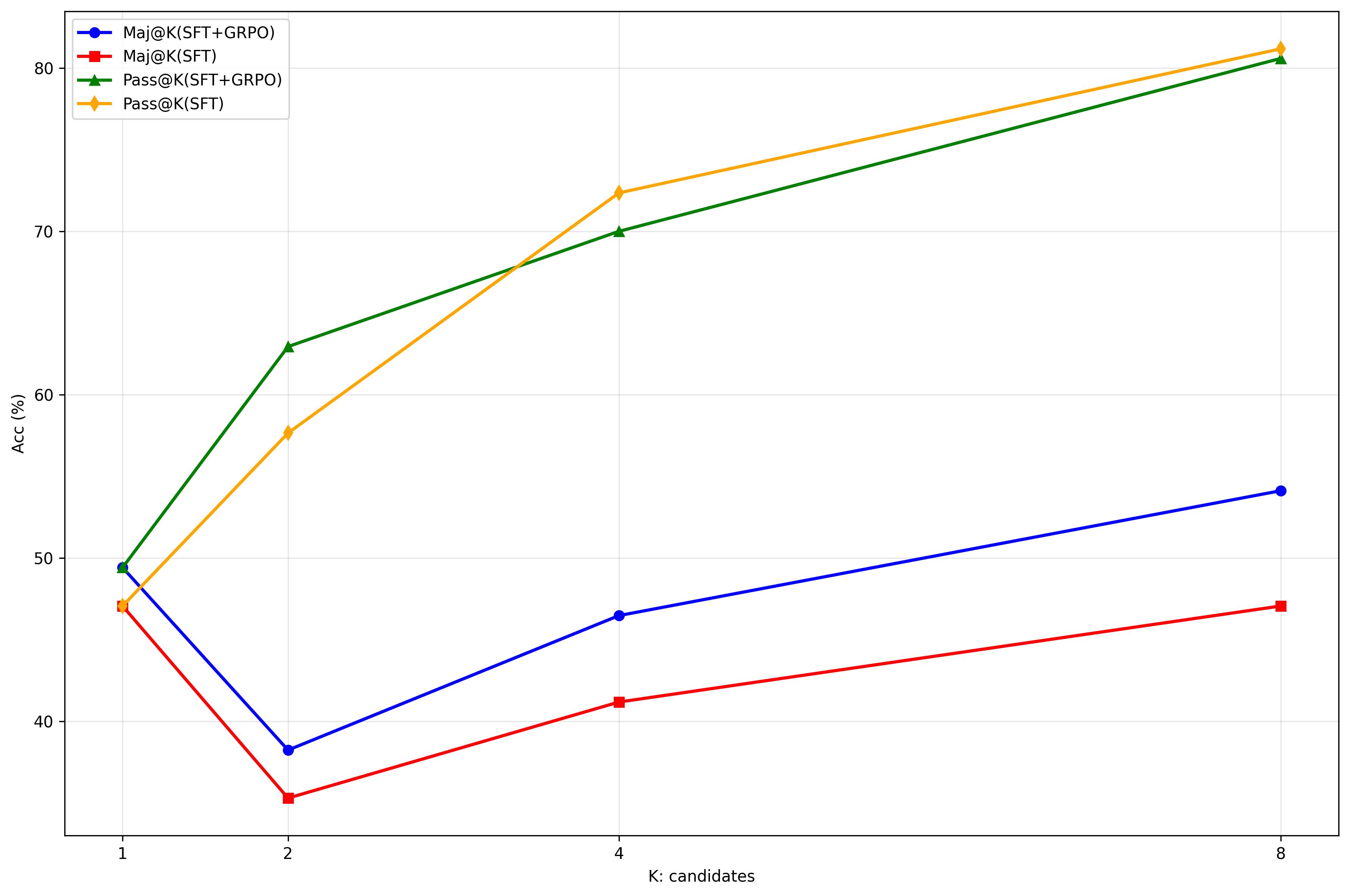

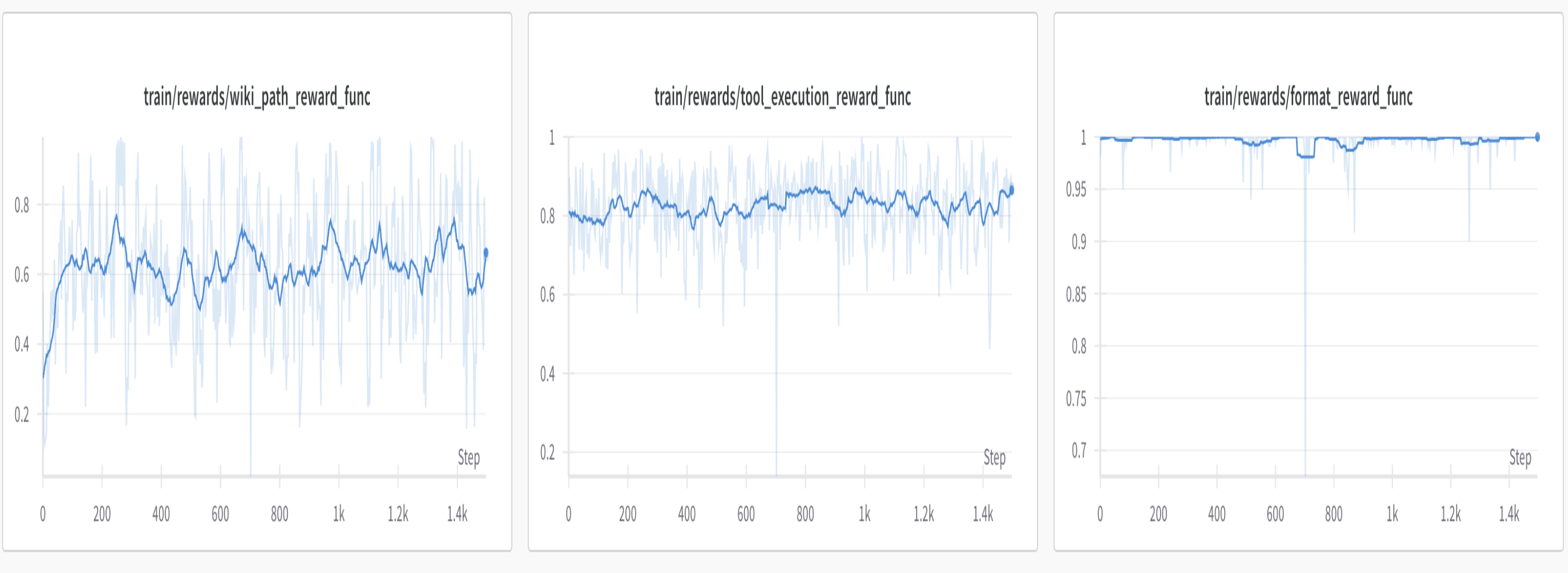

Evaluating Pass@K and Maj@K, RL enhances the Maj@K performance but not Pass@K compared to SFT. In fact the SFT'd model performs slightly better at higher Pass@K. This finding is similar to the observations in the DeepseekMath paper. At Pass@8, both the SFT and RL models outperform the best model, GPT4.1-mini, exceeding its 79.4% accuracy.

Pass@K values are impressive at higher values, but Maj@K and Pass@1 (49.4%) still pales in comparison to 4.1-mini and DeepseekV3. Qwen3-1.7B is capable but inconsistent, which is evident in the high variance shown in the success reward. RL is able to partially address the gap between Qwen3-1.7B and the other models but there are diminishing returns due to the model's small size.

Formatting and Tool use

XML formatting is near perfect but there is still trouble with correct tool calls, which could be attributed to verbose JSON inputs leading to high rates of tool arg hallucinations. Providing a dense list of URLs in JSON format may be suboptimal compared to a simpler format such as a numbered list where clicking a link involves providing the list index.

Hint tool

We provided the model with a hint tool that would provide the abstract of the goal article, but this was only used 0.1 out of 15 allotted turns. This minimal usage likely stems from two factors: first, most goal articles have self-evident topics that don't require clarification and second, the model exhibits overconfidence in its domain knowledge, preferring to act on assumptions rather than verify them.

Minimizing Steps

Our reward function included a per-step penalty intended to encourage shorter paths, but this proved ineffective--both SFT and RL models converged on an average path length of 6 steps.

Reasoning Patterns

Qwen3-1.7B exhibits a characteristic planning approach: it identifies intermediate topic areas that bridge the semantic gap between start and goal articles, but operates with a very loose heuristic rather than concrete multi-step plans. While these strategies are locally viable, the model lacks the kind of detailed path planning that humans would employ.

To get from "Allusion" to "Pinyin", I need to find a path that connects literary concepts to a system of writing for a language. The most promising link in the current article seems to be "Virgil", as he wrote "The Aeneid" which is a significant literary work. From "The Aeneid", I might be able to find links to linguistics or phonetics, which could eventually lead to "Pinyin".

Additional Experiments

Partial Credit: In order to provide denser rewards, partial credit can be given even if the model didn't get to the goal but "seems" to be going the right way. Two types were considered: embedding similarity and rewarding any non-circular path. Partial credits did not appear to matter much since the model was already capable of being correct, at least enough to learn from it.

Tuning Sampling Params: Increased diversity through higher temperature could lead to a more optimal learned-strategy. With temperature = 1.0, top_p=1.0 and top_k = None, it starts off well but rewards start to drop after 300 steps. Over-leveraging exploration at high temperatures appears to compromise consistency.

Curriculum Training: Slowly increasing the difficulty of problems based on human performance. Rewards start off strong with easy problems but drop as harder problems are introduced. The model does not appear to learn any sort of new strategy after seeing "easier" problems.

Learning Rate: Increasing the learning rate too much, from 1e-6 to 2e-5, yields a very quick collapse.

Reflection

In hindsight, I expected that RL would easily make Qwen3-1.7B competitive with the other models that we benchmarked, but it should've been obvious that a small 1.7B model wouldn't magically get a +30% improvement. Especially considering a task that requires 1) significant multi-turn planning and 2) the ability to adapt to unexpected situations e.g. going down bad paths could require a completely new plan. It is evident that the model has all the right knowledge to succeed at the wiki game task but perhaps a slightly larger size (8B or even 4B) could boost the consistency to top our benchmark.

On RL Training

I find that a couple obvious things are essential to a good RL training experience:

- Stringent initial evaluations of both the model, prompts, and the environment. You should identify gaps such as a task being too difficult or convoluted, areas the model struggles with that may require SFT warm-up or additional rewards, specific reasoning patterns or behaviors that need to be clamped down, or just general bugs in your setup.

- Optimize environments and rollouts to be as fast as possible. RL ultimately is a game of patience waiting for the model to hill climb your metric. Once you move into multi-turn, multi-agent this waiting time increases drastically, so do yourself a favor and optimize.

- Garbage in, garbage out. Data quality is critical. Clean your data and keep your dataset diverse.